METHODOLOGY

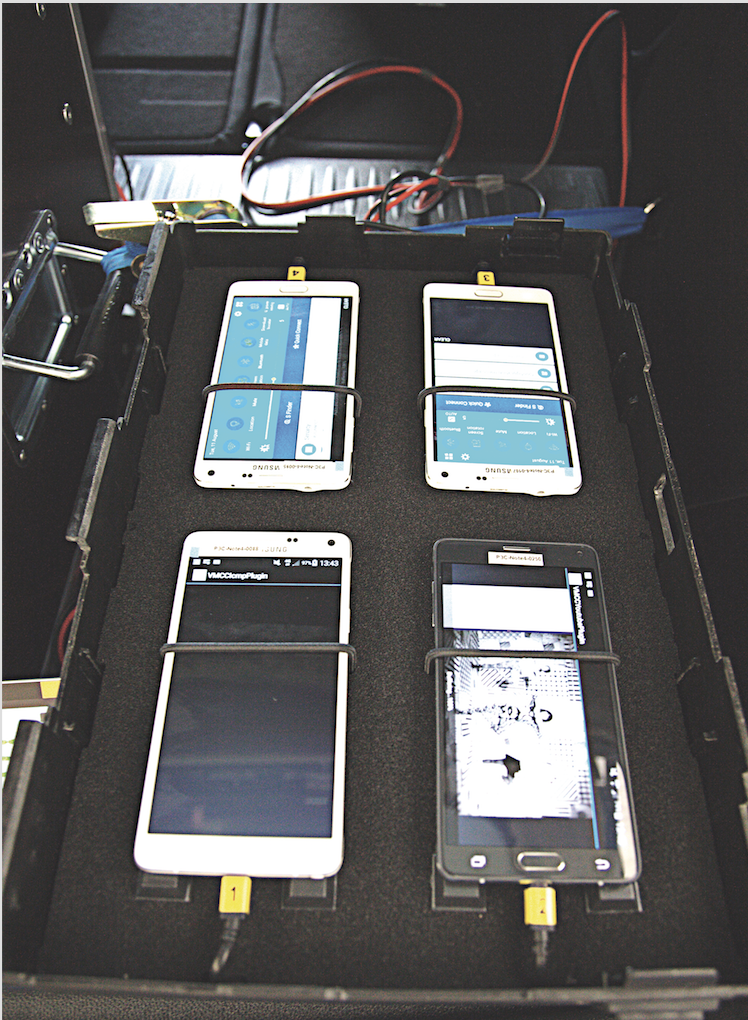

As in previous years, connect’s partner for the network measurements, P3 communications, used two vehicles to test drive the chosen cities, towns and roads. In Germany and Austria each car carried six Samsung Galaxy S5 smartphones to measure voice services and three Samsung Galaxy Note 4 smartphones to perform the data service tests. In order to reflect the advanced roll-out of LTE with “3 Carrier Aggregation” (the combination of three carrier frequencies) in Switzerland, we used three Samsung Galaxy S7 devices for the data measurements there. The same device setup was used in the walk tests. For this purpose, the smartphones were installed in trolleys and backpacks with additional batteries.

Each device ran the respective operator’s current firmware version. If no such operator firmware was available, the most recent firmware from Samsung was used.

Voice telephony

Voice services were measured with the smartphones performing calls alternating between the two measurement cars (“mobile-to-mobile”). An additional car served as a mobile remote station for the calls of the walk test teams.

To reflect a realistic usage scenario, one of the smartphones transmitted background data traffic during each call. Audio quality was assessed using POLQA (Perceptual Objective Listening Quality Assessment) wideband scoring.

All devices were configured in “LTE preferred” mode. Thus in the three German networks as well as with A1 in Austria and Swisscom in Switzerland, the modern Voice-over-LTE (VoLTE) service could be used. Within networks not yet supporting VoLTE, the smartphones were forced to switch to 3G or 2G technology for calls, the so-called circuit-switched fallback (CSFB).

Data connectivity

To assess cellular data performance, a sequence of tests was executed. As a dynamic web-browsing test, each country’s top web sites (according to the Alexa ranking) were downloaded in the so-called live web-browsing test. In addition, a static web site was tested: the industry-standard ETSI (European Telecommunications Standards Institute) “Kepler” reference page. HTTP downloads and uploads were performed with 3 MB and 1 MB files, simulating small file transfers. The networks’ peak performance was tested with a ten-second download and upload of a single, very large file.

The YouTube measurements took into account the video platform’s new “adaptive resolution” feature. In order to offer a continuous video experience, YouTube dynamically adapts the resolution of its video streams to the bandwidth that is currently available. Our scoring therefore considers the success ratio, the time until playback starts, the percentage of video playouts that take place without interruptions and the videos’ average resolution (that is, their line count).

Indoor and train measurements

The walk tests consisted of the same tasks that were performed in the cars. For this purpose, two teams took measurements in public transport and in public places such as coffee shops, museums, train stations and airport terminals. Travelling from city to city by public transport allowed the assessment of cellular network quality on long-distance trains.

Logistics

The tests were performed in Austria, Germany and Switzerland around the same period of time (Germany: October 21 – November 12; Austria: October 7 – 27; Switzerland: October 14 – November 1). All measurements were taken between 8 AM and 10 PM. Both cars were always in the same cities, but on different routes, so that one car’s measurements could not interfere with the other’s. Both vehicles followed a given route, including fixed-location measurements at “areas of interest” such as well-visited public places. Measurements there lasted one hour. Locations such as train stations, airports, much-frequented public parks or high-density urban areas typically demonstrate how networks respond when a high number of users compete for their share of bandwidth within the network’s available radio frequencies.

The drive tests included 17 larger cities and 26 smaller towns in Germany, while the walk tests covered six cities. In Austria the drive tests covered 11 big cities and 20 smaller towns, and the walk test team visited five cities. In Switzerland, the test route included 13 big cities and 20 smaller towns, with the walk tests conducted in four cities. Travel between the cities mainly used highways, but smaller state and county roads were driven as well. For each connect test, P3 communications follows a well-defined process to generate four independent and representative city and route plans. The connect editors then randomly choose one of these four alternatives.

Test efforts and results

Overall, 25,000 km were driven for the connect P3 mobile network test in 2016. In Germany, the approximately 12,100 km of driven routes, together with the cities and areas visited, represent 13.4 million inhabitants, or around 16.7 per cent of Germany’s population. Austria was measured by driving 5,900 km, covering about 3 million inhabitants (approx. 36 per cent of the Austrian population). In Switzerland, the test teams drove approx. 7,000 km, covering 1.9 million people or around 22.5 per cent of the Swiss population. This is certainly a huge effort, but a necessary one to gain the required statistical relevance and confidence in the test results.
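The figures in this paragraph can be cross-checked with a few lines of Python. The following is only a sketch using the rounded numbers quoted above; the dictionary layout and the function names are illustrative assumptions, and the "implied population" is merely what the rounded coverage shares suggest, not an official statistic.

```python
# Rounded figures as quoted in the article (not exact measurements).
ROUTES = {
    "Germany": {"km": 12_100, "covered_millions": 13.4, "share_pct": 16.7},
    "Austria": {"km": 5_900, "covered_millions": 3.0, "share_pct": 36.0},
    "Switzerland": {"km": 7_000, "covered_millions": 1.9, "share_pct": 22.5},
}


def total_km(routes):
    """Sum the driven distance over all countries."""
    return sum(country["km"] for country in routes.values())


def implied_population_millions(covered_millions, share_pct):
    """Total population implied by 'covered inhabitants' and 'share of population'."""
    return covered_millions / (share_pct / 100)


print(f"Total distance: {total_km(ROUTES):,} km")
for name, c in ROUTES.items():
    implied = implied_population_millions(c["covered_millions"], c["share_pct"])
    print(f"{name}: implied population of roughly {implied:.1f} million")
```

The three route lengths add up to the 25,000 km stated for the overall test.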

Scoring

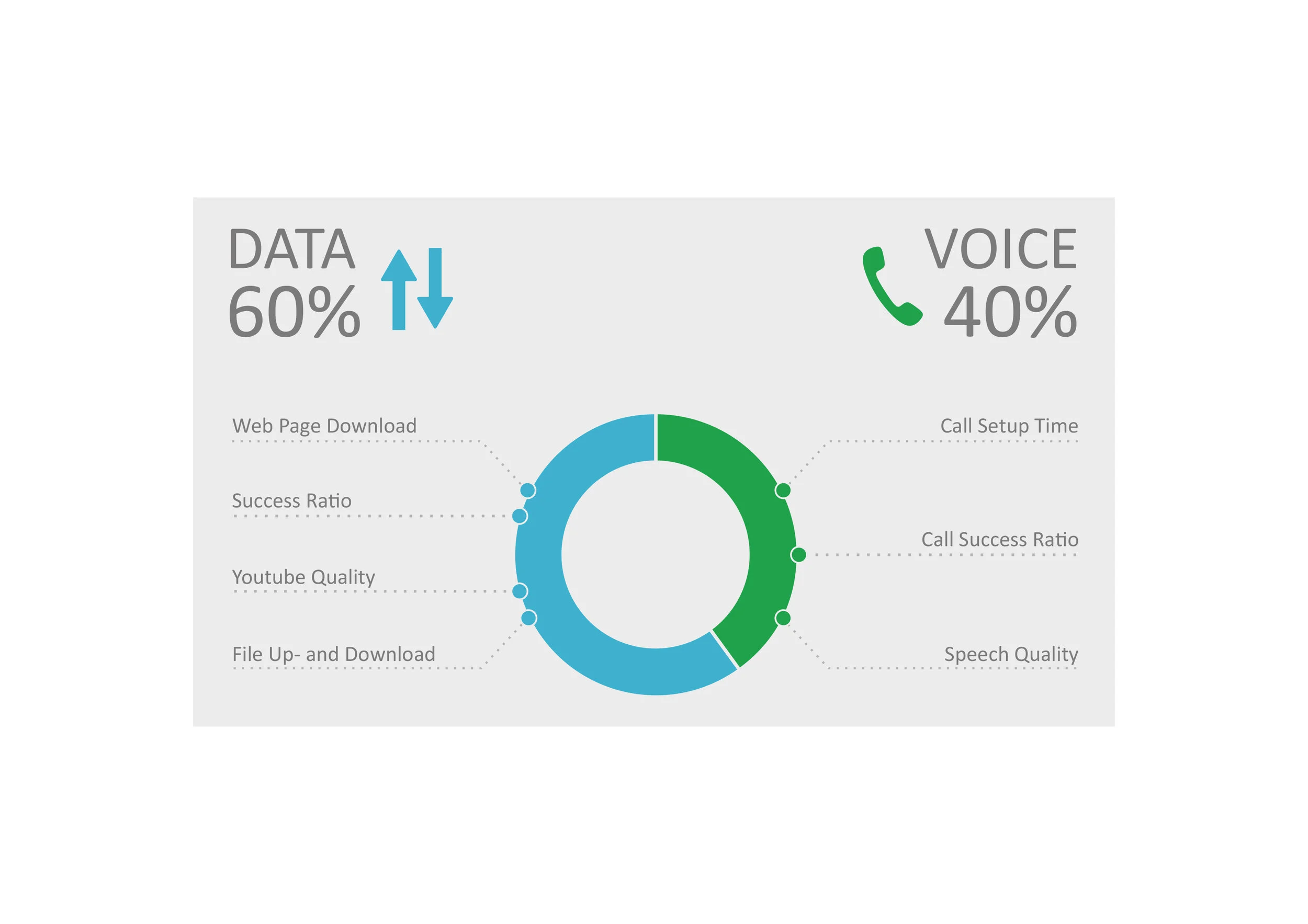

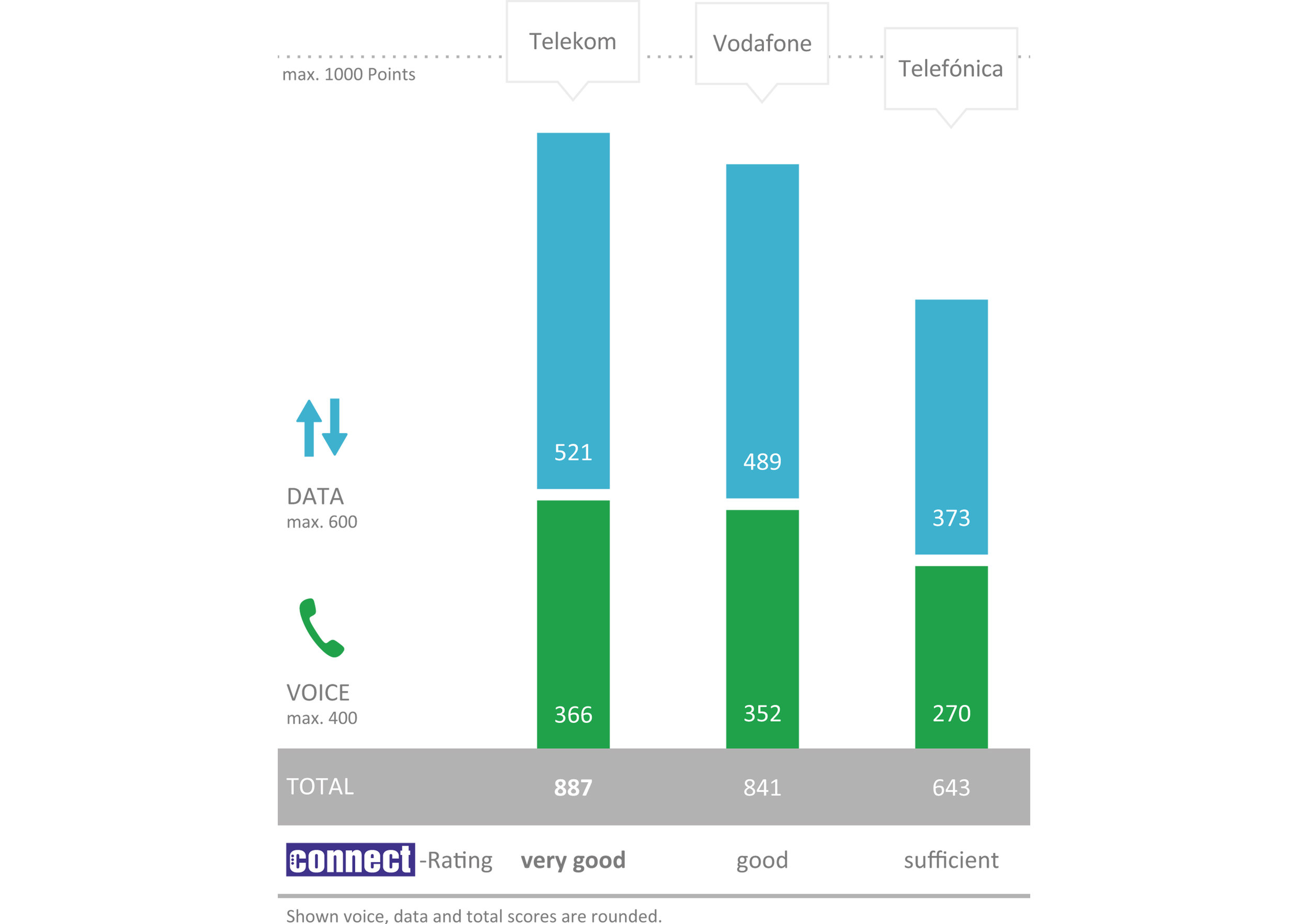

The results of the voice tests contribute 40 per cent of the total score, while those of the data tests make up 60 per cent. For the overall result we apply a 1000-point scheme so that the results can be represented in sufficient detail. This scheme also makes it easier to compare the results of network tests that we have conducted in different countries.
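To illustrate the weighting, here is a minimal sketch in Python. Only the 40/60 split and the 1000-point scale come from the text above; the assumption that each category's result can be expressed as a fraction of its achievable points and combined linearly is ours, as are the function names and the sample inputs.

```python
VOICE_WEIGHT = 0.40  # share of the total score, from the article
DATA_WEIGHT = 0.60   # share of the total score, from the article
MAX_POINTS = 1000    # overall point scheme, from the article


def max_points_per_category():
    """Maximum achievable points for voice and data under the 40/60 split."""
    return {
        "voice": round(VOICE_WEIGHT * MAX_POINTS),
        "data": round(DATA_WEIGHT * MAX_POINTS),
    }


def total_score(voice_fraction, data_fraction):
    """Combine category results (each 0.0 to 1.0) into a score out of 1000.

    Assumption: a simple linear combination; the article does not spell
    out how sub-results are aggregated within each category.
    """
    for value in (voice_fraction, data_fraction):
        if not 0.0 <= value <= 1.0:
            raise ValueError("fractions must lie between 0.0 and 1.0")
    weighted = VOICE_WEIGHT * voice_fraction + DATA_WEIGHT * data_fraction
    return round(MAX_POINTS * weighted)


print(max_points_per_category())
# Hypothetical operator: 90 % of the voice points, 80 % of the data points.
print(total_score(0.90, 0.80))
```

Under these assumptions, voice can contribute at most 400 points and data at most 600 points to the total.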

Professional and critical: Bernd Theiss, head of test and technology at connect (on the left), and Hakan Ekmen, managing director of P3 communications (on the right).

Each box housed four smartphones, which allowed the simultaneous testing of four mobile operators.

Three boxes were mounted in the back and side windows of each measuring car in order to support twelve smartphones per car.

O2 and E-Plus

Here are the reasons why we tested and evaluated the merging networks of E-Plus and O2 as a single O2 network.

Since Telefónica/O2 bought out its former competitor E-Plus in October 2014, the merger of the two networks has been proceeding at full speed. Former E-Plus customers are being transferred to O2 tariffs, and according to the German regulatory authority, Telefónica must sell off some base stations that had been occupied by both operators. The remaining base station sites are already designated “O2”. Cells formerly belonging to E-Plus are no longer visible as a discrete mobile network. Instead, at the moment there are “old” O2/E-Plus cells along with “new” ones.

Given this situation, connect and P3 decided to examine only O2 in the network test conducted in late 2016. As we know from our readers and from our own experience, difficulties do occur in the course of the network merger. These problems, which include failed handovers between two differently configured network cells, are clearly recognizable in the results of this year’s P3 connect mobile network test.

Fairness and transparency

This year, some of the candidates made massive attempts to influence the conditions and parameters in the run-up to our test. The connect and P3 staff responsible for the testing project have of course fended off these attempts.

As in previous years, connect and P3 met in early 2016 in order to define the conditions and parameters for this year’s network test. In this preliminary test design phase we, for example, identify new test criteria, discard or confirm old ones and determine their influence on the overall score. We define the timeframe as well as a preselection of smartphone models that we intend to use for the measurements. We then communicate these preliminary definitions in advance to the CTOs of the network operators.

Feedback is appreciated

In this process we appreciate feedback about aspects such as suitable tariffs that allow unobstructed measurements of the best possible performance. After all, our objective is to evaluate the network experience of the most demanding customers. We also agree on the firmware versions used in the measurement smartphones, as each mobile network operator makes adjustments to the most popular devices to ensure a smooth interplay with its network.

But this time, some contenders apparently took part in the discussions with the sole intent of enforcing measurement conditions that would favour their own network. For example, there were attempts to impose on us a smartphone model that, all in all, works less reliably than others, presumably because the provider involved expected an advantage for its own network from this.

One operator insinuated flaws in the test design more than once, until extensive measurements conducted both by P3 communications and by the connect test lab disproved all of them. The constantly shifting arguments of some operators led connect to assume that one or another of them would not have minded if our test had missed its rapidly approaching deadline.

The more danger, the more honour

We cannot help but take such attempts as a compliment that reflects the high relevance the operators assign to our test. And of course we remain true to ourselves concerning these issues. After all, it is our standard to conduct a test that provides deep insights into the quality and performance of the examined mobile networks.

However, we will draw one conclusion from this year’s experience: in the future, we will publish obvious attempts to abuse our transparent approach to testing in the very same way that we document our test procedures.

Historical development

Looking back at the results of the connect network tests since 2010 yields one insight in particular: despite constantly rising requirements, the level of the overall results has steadily improved. We reckon that our demanding and well-renowned network test is not entirely blameless for this.

Customers’ expectations are constantly growing: expanding data volumes and rising transmission speeds are regarded as entirely normal. P3 and connect take account of this development by constantly raising the requirements and thresholds of our tests.

Network test as a driving force

The adjoining look at the development of results in Germany, Austria and Switzerland in recent years shows a clear overall tendency: despite the growing requirements, all tested networks improved steadily. In all modesty, we believe that the high relevance and challenging demands of our annual network tests are an important driving force behind this development.

Hannes Rügheimer, connect author

Conclusion

The operators fight enthusiastically for the top rank in the connect network test. The fact that almost all candidates managed to improve in spite of the rising requirements clearly supports our claim that our critical tests contribute to the overall enhancement of the mobile networks’ quality.

Against this background, the repeated test victory of Deutsche Telekom in Germany was by no means a foregone conclusion. Rather, it reflects the considerable efforts that Telekom makes in order to maintain and extend its network. Vodafone also worked flat out, but remains in second place. O2’s result shows some room for improvement, but can be explained by the ongoing integration with the former E-Plus network.